Certificate Management & PKI for Enterprise and Government: Best Practices for 2026

Three crises at the same time: 47-day certificates, exploding PKI and post-quantum migration. Certificate Lifecycle Management makes your infrastructure future-proof.

Contents

1. Introduction: 3 revolutions unravelling PKI management

2. The challenges in detail: shorter lifespans, wildcard & multidomain, private PKI and MS AD CS, SaaS complexity, PQC and digital sovereignty

3. The 3 pillars of certificate management in 2026

4. Addressing the digital sovereignty challenge

1. Three Revolutions in the PKI World

Three concurrent transformations are hitting your PKI in 2026: they present you with urgent challenges, affect your PKI at scale, and require you to fundamentally rethink it.

First, urgent challenge: Shortening the certificate lifetimes

From 15 March 2029, public TLS certificates will be valid for a maximum of 47 days. That means eight to nine times as many renewals as today, and equally more frequent certificate exchanges on every endpoint. For organisations managing hundreds of public certificates, this is not merely inconvenient; it is an existential operational risk.

The CA/Browser Forum has adopted a binding timeline with Ballot SC-081v3 in April 2025:

-

From 15 March 2026: Maximum validity 200 days

-

From 15 March 2027: Maximum validity 100 days

-

From 15 March 2029: Maximum validity 47 days

You can read more about the background in our blog article on shortening lifespans.

At the same time, the reuse periods for validation data are being shortened drastically: by 15 March 2029, domain validation data may be reused for only 10 days (previously 398 days), and OV owner information for 398 days (previously 825 days).
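To make the operational effect of this timeline tangible, the following is a minimal sketch that maps an issuance date to the maximum validity listed above and derives a lower bound on renewals per year. The function and constant names are illustrative, and the 398-day figure is assumed as the limit that applies before 15 March 2026.

```python
from datetime import date

# Effective dates and maximum TLS certificate validity in days, as listed above.
VALIDITY_SCHEDULE = [
    (date(2029, 3, 15), 47),
    (date(2027, 3, 15), 100),
    (date(2026, 3, 15), 200),
]
# Assumption: 398 days is the maximum before the first milestone.
LEGACY_MAX_DAYS = 398


def max_validity_days(issued_on: date) -> int:
    """Return the maximum validity for a certificate issued on the given date."""
    for effective_from, max_days in VALIDITY_SCHEDULE:
        if issued_on >= effective_from:
            return max_days
    return LEGACY_MAX_DAYS


def renewals_per_year(max_days: int) -> float:
    """Lower bound on renewals per year if every certificate runs its full lifetime."""
    return 365 / max_days


if __name__ == "__main__":
    for day in (date(2025, 6, 1), date(2026, 3, 15), date(2027, 3, 15), date(2029, 3, 15)):
        limit = max_validity_days(day)
        print(f"{day}: max {limit} days, at least {renewals_per_year(limit):.1f} renewals per year")
```

In practice, certificates are renewed before they expire, so the real renewal count will be higher than this lower bound.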

Second, broad challenge: The exploding certificate landscape

More and more devices, applications and services require public and, more importantly, private certificates. DevOps and cloud expansion mean more private PKI use cases. Machine identities now outnumber human identities 45:1 in typical enterprises (Gartner 2024). In cloud-native environments, the ratio is even more extreme. This discrepancy is accelerating with the adoption of AI and automation.

Third, fundamental challenge: Post-Quantum Cryptography Migration

NIST IR 8547 from November 2024 states: From 2030, the classical public-key algorithms RSA and ECDSA will be deprecated. From 2035, they will be disallowed entirely, including for private certificates with long validity periods of 5 to 10 years.

What's at stake

Even today, we regularly read about incidents in which services lose their protection through encryption and thus fail – with serious business and reputational damage for the organisations responsible. A SwissSign customer survey among DACH-MPKI customers in 2025 revealed that 74% of larger organisations with more than 500 employees have experienced service outages due to expired certificates in the last five years. The major changes that are imminent will drastically increase the risk of such outages:

-

The Bank of England experienced a 91-minute outage of its CHAPS payment system on 31 July 2024 due to an expired certificate. The system processes over £360 billion daily. Another certificate-related incident of 24 minutes followed in February 2025.

-

Alaska Airlines had to ground flights in Seattle in September 2024 because an expired certificate caused an IT failure.

-

SpaceX Starlink suffered a global satellite outage in April 2023 due to an expired ground station certificate.

-

Microsoft Teams was unavailable for 20 million users for three hours in February 2020 – the cause was an expired authentication certificate.

Security breaches weigh even more heavily

Beyond business losses and trust crises, forgotten or poorly managed certificates create serious security vulnerabilities that attackers can exploit. Expired or misconfigured certificates expose organisations to fraud and unauthorised access to sensitive systems.

-

The 2017 Equifax breach went undetected for 76 days because an expired certificate had disabled traffic inspection. By the time the certificate was renewed and administrators noticed the breach, the attackers had already exfiltrated 147 million personal data records containing social security numbers, birth dates, addresses, and partial credit card information.

With the three challenges described above, such attacks will become considerably more likely in the coming years.

Webinar on PKI Best Practice 2026

From the gradual reduction of validity periods to the increasing challenges of private certificates and post-quantum cryptography: join our Head of Certificate Services, Alain Favre, for a session on public key infrastructure management best practice in 2026 (in English):

-

Challenges: Reduction of certificate lifespans, PQC, digital sovereignty

-

Three pillars for your PKI management in 2026: discovery, governance, automation

-

Demo of SwissSign's Certificate Lifecycle Management powered by Evertrust

-

Implementation and practical advice

Thursday, 21 May, 11:00 to 12:00

Our webinar is primarily aimed at organisations in regulated industries such as banking, insurance, the public sector or critical infrastructure, or at companies that work with these organisations.

(By registering for the webinar, you consent to SwissSign AG processing your data for the purpose of contacting you and/or for advertising purposes. More on www.swisssign.com/webinar-privacy.)

2. The challenges

2.1 Why traditional PKI management will fail

The effects of shorter validity periods

Why this change is coming: Shorter lifetimes reduce the window of opportunity for compromised keys to be misused. Rapid revocation becomes less critical as certificates expire on their own. Enforced automation improves the overall security posture for an organisation and also increases agility for the certificate changes needed to equip infrastructure and networks with new quantum-safe encryption algorithms ('crypto-agility').

What that means operationally: Manual processes become impossible.

In addition, from March 2029 organisations must re-validate control of their domains every ten days. However, the CA/Browser Forum has addressed this problem: Ballot SC-088v3 introduces a new 'Persistent DCV' method. Domain owners can set a one-time DNS TXT entry that remains valid over several certificate issuances and is continuously validated by the Certificate Authority. We at SwissSign will inform you about the new method in our CA/B Update Newsletter as soon as we are ready.

The industry is not yet ready: A SwissSign survey shows that 90% of organisations with more than 500 employees still manage certificates at least partially manually. More than 50% have thought about or made concrete plans for preparing for the shorter validity period. Only about half scan their network regularly for certificates.

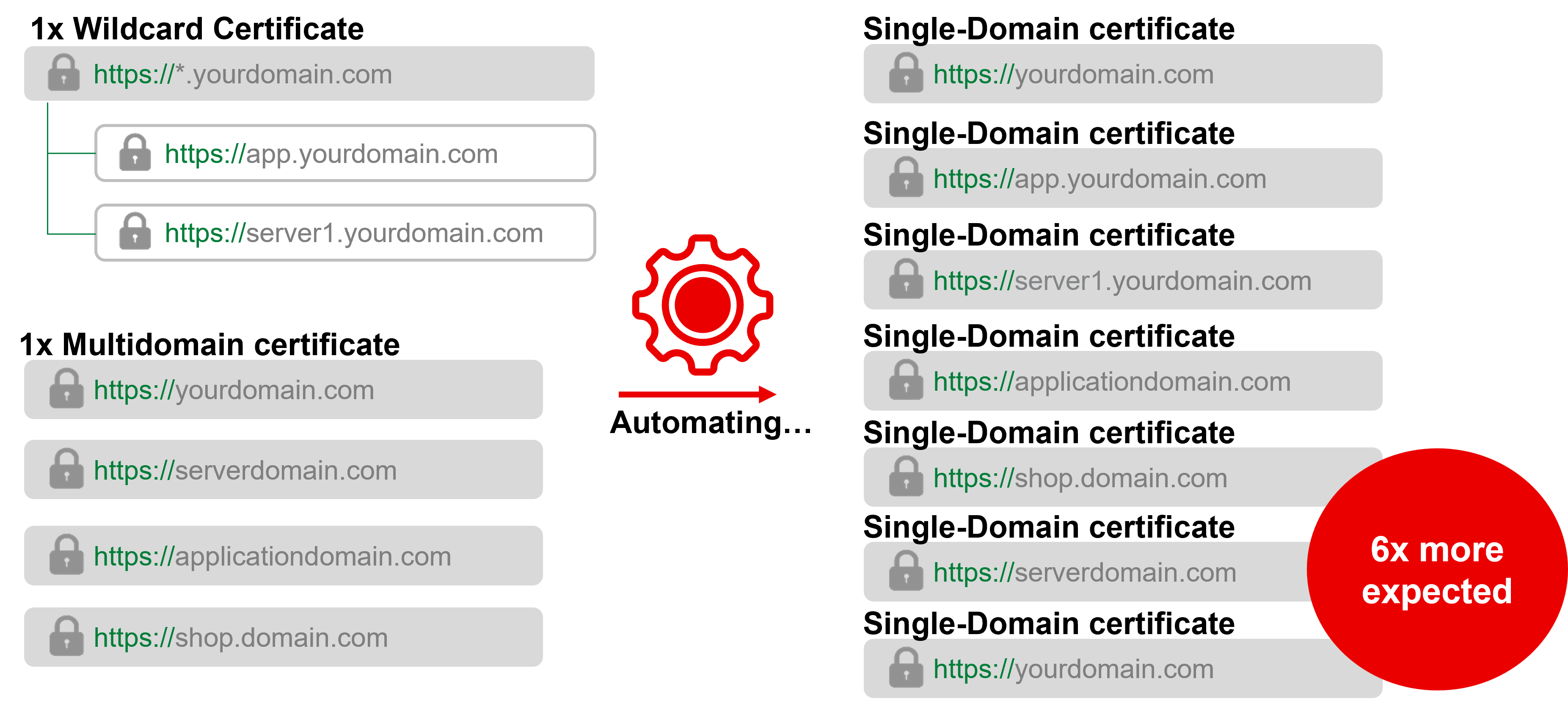

The Wildcard and Multi-Domain Problem

Why Wildcards and Multi-Domain Don't Go Hand-in-Hand with Automation: Many organisations today secure various domain endpoints with a wildcard certificate instead of with individual single-domain certificates. This simplifies operations: organisations only need to renew one certificate, which saves time and costs.

This already poses security risks today: if a wildcard certificate is compromised, all connected applications are at risk. The same applies to multi-domain certificates (SAN), which also bring the BygoneSSL problem with them: if a single domain changes ownership, the new owner can have the entire certificate revoked – and thus bring all other domains to a standstill.

In the future, automation will add a further obstacle: wildcard certificates are considerably harder to renew automatically. Automation protocols such as ACME validate each name individually, and wildcards require DNS-01 validation with API access to the DNS provider, while single-domain certificates can use the simpler HTTP-01 validation. In practice, straightforward automation therefore calls for dedicated certificates per endpoint.

We therefore recommend that organisations only use wildcards for specific reasons and applications:

-

For dynamic DNS: If you constantly create and remove new subdomains, for example for dev environments or customer sandboxes

-

Load Balancers and Reverse Proxies: A wildcard on your edge proxy does not significantly increase the blast radius – a compromise of the proxy still means access to all subdomains

-

For security reasons: If the individual subdomains should not be immediately publicly visible, because a wildcard keeps their names out of public CT logs

To achieve automation, organisations will therefore need to procure significantly more single-domain certificates in the future.
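The difference in validation effort between wildcard and single-domain names can be sketched as follows. This is an illustrative helper, not part of any ACME client: the function names are hypothetical, and the rule it encodes is the one described above (wildcards via DNS-01, other names via HTTP-01 where the endpoint is reachable).

```python
def acme_challenge_for(identifier: str) -> str:
    """Pick a validation method for a DNS name.

    Wildcard names are validated via DNS-01, which needs API access to the
    DNS provider; other names can use the simpler HTTP-01.
    """
    if identifier.startswith("*."):
        return "dns-01"
    return "http-01"


def plan_validation(identifiers: list[str]) -> dict[str, str]:
    """Map every requested name to the challenge type it would need."""
    return {name: acme_challenge_for(name) for name in identifiers}


if __name__ == "__main__":
    # example.com names are placeholders for illustration only.
    plan = plan_validation(["shop.example.com", "*.dev.example.com"])
    for name, challenge in plan.items():
        print(f"{name}: {challenge}")
```

A plan like this makes it visible early which certificates will need DNS provider credentials in the automation pipeline.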

Risk of budget explosion at many Certificate Authorities: Most CAs charge for certificates per SAN listed. Depending on the CA's pricing model, an increase in single-domain certificates can therefore lead to significantly higher costs.

The Growing Private-PKI Challenge

Applications that are increasing daily: A private PKI offers a lot of flexibility because its certificates are not part of the public trust hierarchy of the Internet and are therefore not subject to the rules of the CA/Browser Forum. Private certificates, issued based on an organisation's own root certificate, are needed for WiFi and VPN authentication of users and devices, Microsoft AD user authentication, Citrix and NetScaler for VDI, Intune for MDM and device management, IoT device authentication, internal servers without public access, container and Kubernetes workloads, and service mesh with mTLS.

Why Governance is Important Here Too: Private certificate failures do not make headlines, but they can bring down critical internal systems if not well managed. Shadow PKI occurs when teams set up their own CAs without central visibility. The lack of audit trails also creates a compliance blind spot. Manual revocation of certificates, which is necessary when employees leave or devices are decommissioned, creates opportunities for errors and thus security vulnerabilities. DevOps agility can leave certificates unmanaged or not revoked in a timely manner, which creates further security risks.

Microsoft AD CS: Still essential, but not sufficient

Current status: In November 2025, Microsoft integrated PQC support (ML-KEM, ML-DSA) into Windows Server 2025 and Windows 11 via the CNG API. For Active Directory Certificate Services (AD CS), PQC support has been announced for early 2026.

Benefits of AD CS for Enterprise PKI: A SwissSign survey shows that 80% of larger organisations in the DACH region use AD CS for their private PKI. AD CS offers native Windows integration with group policy-driven automatic enrolment. It is deeply embedded in Windows enterprise environments and is perfect for Windows-centric certificate provisioning. The system is mature and well-known to Windows administrators.

Where AD CS needs to be complemented: AD CS issues certificates but does not track the inventory, ownership, or lifecycle of private certificates across your entire infrastructure. Integration with Linux, containers, and cloud workloads is weak. After mergers and acquisitions, there is no ability to consolidate visibility across multiple CAs. Modern protocols such as ACME and EST require additional tools. Schema updates help with post-quantum cryptography, but the algorithm change requires visibility that AD CS does not provide. Permission segregation is very complex to set up.

The reality: AD CS will not disappear. But organisations need additional systems like CLM to manage AD-CS certificates along with public certificates, cloud workloads, and multi-vendor environments.

The enforcement of a strong certificate mapping (Microsoft KB5014754) in February 2025 has added another configuration burden that CLM helps with. Certificates issued through Intune SCEP/PKCS or offline templates now require SID extensions. Organisations still using legacy configurations are experiencing authentication failures in VPN, WiFi and device authentication.

Decentralised Ownership and SaaS Complexity

Typical company situation: IT Operations issues infrastructure certificates. DevOps issues application certificates. Individual business units issue their own certificates for their own applications. There is no central inventory and there are no unified policies, even though the security team believes it has an overview of all certificates.

The SaaS Certificate Problem: Monitor the certificates used by your SaaS provider – including those operated by individual departments – to ensure that critical SaaS systems remain available. Even if the providers are responsible, the business or trust losses fall on you.

Custom domains that you configured on SaaS platforms also require certificates that you manage. API integration certificates for authentication to cloud services are also your responsibility. As are mTLS certificates for secure data exchange with external platforms.

These certificates are outside of your core infrastructure, but can still cause outages if they expire. CLM should also discover and track such integration certificates, not just internal infrastructure – automated and without additional effort for the security team.

2.2 Post-Quantum Cryptography: The Approaching Deadline

The problem

Today's public-key algorithms such as RSA and ECDSA cannot be broken in practice with classical computers. Sufficiently powerful quantum computers will be able to break them. 'Harvest now, decrypt later' attacks are already taking place: adversaries are storing encrypted data for future decryption. New algorithms also require significantly larger keys and signatures.

Impact on infrastructure: Many older devices, IoT and network equipment may not be able to handle these larger certificates. Firmware updates across the entire infrastructure will be required. In certificate-intensive operations, network bandwidth becomes an issue. Certificates with the new algorithms must be tested across the entire infrastructure before they are put into production.

Schedule according to NIST IR 8547 from November 2024: From 2030, RSA, ECDSA, EdDSA, DH and ECDH will be classified as deprecated at the 112-bit security level. Because this guidance comes from the US NIST, it is expected to set a global industry standard. 'Deprecated' means: stop using, start migrating, but technically still allowed. This applies to digital signatures according to FIPS 186 and key establishment according to SP 800-56A and SP 800-56B.

From 2035 onwards, these algorithms are disallowed entirely. All RSA certificates must be replaced, including private certificates with a 5- to 10-year validity period.

What needs to happen: In line with industry consensus, Gartner recommends treating 2029 as a practical deadline to avoid security risks and operational issues.

All certificates must be converted to quantum-safe algorithms such as ML-KEM, ML-DSA and SLH-DSA. This requires replacing every single certificate in your infrastructure. Legacy systems must be tested with new algorithms, especially with regard to bandwidth and firmware compatibility. A certificate inventory is a prerequisite for migration planning. The migration as a whole will take years, not months.

Why PQC Readiness Starts with PKI Modernisation: Post-Quantum Cryptography is not a distant topic, but a 2- to 3-year migration project that requires the same skills as managing short-lived certificates:

-

Transparent inventory: Unknown certificates cannot be migrated.

-

Automated deployment: Manual PQC rollout over more than 250 certificates is impossible.

-

Policy enforcement: Hybrid certificates with classical and PQC algorithms must run in parallel during the transition phase, which requires clear rules.

-

Algorithm Agility: The infrastructure must support multiple cryptographic algorithms simultaneously.

-

Large-scale testing: PQC certificates must be validated to ensure they do not break applications before they go into production.

Conclusion: Organisations that solve the challenges of shortening the lifecycle today will be able to handle the PQC migration of tomorrow. Those that don't will face two simultaneous crises.
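A first migration triage on top of a certificate inventory could look like the following minimal sketch. The record structure, the status labels and the exact cut-off dates (1 January of each year) are assumptions made for illustration; only the years 2030 and 2035 and the algorithm names come from the schedule described above.

```python
from dataclasses import dataclass
from datetime import date

# Assumed cut-off dates derived from the years named in the schedule above.
DEPRECATED_FROM = date(2030, 1, 1)
DISALLOWED_FROM = date(2035, 1, 1)
# Algorithm families named above as affected by the migration.
CLASSICAL = {"RSA", "ECDSA", "EdDSA", "DH", "ECDH"}


@dataclass
class CertRecord:
    subject: str
    algorithm: str
    not_after: date


def pqc_status(cert: CertRecord) -> str:
    """Classify one inventory record by how urgently it needs migration."""
    if cert.algorithm not in CLASSICAL:
        return "ok"
    if cert.not_after >= DISALLOWED_FROM:
        return "must-replace"
    if cert.not_after >= DEPRECATED_FROM:
        return "plan-migration"
    return "expires-before-deadline"


if __name__ == "__main__":
    # Hypothetical inventory entries.
    inventory = [
        CertRecord("internal-root", "RSA", date(2036, 1, 1)),
        CertRecord("iot-gateway", "RSA", date(2031, 5, 1)),
        CertRecord("www", "ECDSA", date(2026, 6, 1)),
    ]
    for cert in inventory:
        print(f"{cert.subject}: {pqc_status(cert)}")
```

Long-lived private certificates are the ones most likely to land in the "must-replace" bucket, which is why the inventory has to include them.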

2.3 The challenge of digital sovereignty

NIS2 requirements for CAs as a critical supply chain

NIS2, the Network and Information Security Directive 2.0 of the European Union, considers Certificate Authorities as critical supply chain components:

Organisations are legally required to assess the 'specific vulnerabilities of each direct supplier'. When a US CA is used, this assessment must explicitly take the non-EU jurisdiction into account. Operators of critical infrastructure in the energy, transport, health and public sectors face EU fines if certificates are revoked or customer data is disclosed as a result of US legislation. The Commission encourages critical infrastructure to use EU-qualified Trust Service Providers (QTSP) and reserves the right to mandate QTSPs for certain sectors in the future.

DORA requirements for the financial sector

DORA, the EU's Digital Operational Resilience Act, which has applied to European financial firms since January 2025, is stricter and treats CAs as 'ICT third-party providers':

Financial institutions must maintain a detailed register of all ICT third-party providers, including CAs. DORA requires the avoidance of 'over-reliance' on a single provider or a single non-EU jurisdiction. Financial institutions must map all subcontractors of their CAs. In November 2025, the European Supervisory Authorities designated critical ICT third-party providers, following supervisory guidance published in July 2025.

Sanction and revocation risk

Direct risk: US CAs can be forced to end business relationships with organisations sanctioned by the US government if they are directly put on a 'black list'. For example, according to media reports, International Criminal Court chief prosecutor Karim Khan lost access to his work emails after sanctions by the Trump administration (although Microsoft disputes this). Russian financial and defence organisations lost access to certificates after the 2022 sanctions.

Collateral damage risk: If a part of an organisation inadvertently violates US measures against countries, industries or individuals, the entire entity may be placed on a blacklist, after which US organisations may stop providing their services.

Trust and reputation

Many European and Swiss citizens prefer local solutions for sensitive infrastructure, and the issue of 'digital sovereignty' is gaining ground in public debate amid the current geopolitical upheavals. This is particularly relevant for organisations that process citizen or customer data.

3. The Three Pillars of Certificate Lifecycle Management

3.1 Discovery: What you can't see, you can't manage

What it is: Comprehensive scanning of the entire infrastructure to build a complete certificate inventory.

Discovery methods: Active probing and network discovery cover HTTPS, LDAPS and other ports across network domains. Local client discovery means inspecting file systems and certificate stores on servers, workstations and mobile devices. Third-party imports use APIs and integrations to pull certificates from cloud services such as AWS, Azure and GCP, load balancers such as F5, and other applications. Certificate Transparency monitoring enables real-time observation of publicly issued certificates.

How you implement it: Schedule scan campaigns, weekly or daily for critical segments. Deploy lightweight agents on relevant endpoints for continuous visibility. Integrate with cloud platforms and infrastructure tools. Connect your Certificate Lifecycle Management to MDM platforms such as Intune, Jamf and Workspace ONE.

Why it's important: You cannot automate the renewal of unknown certificates. Compliance requires audit trails for all cryptographic assets. A risk assessment and a comprehensive security posture are impossible without a complete inventory. PQC migration planning requires knowledge of every certificate and its algorithm. Comprehensive discovery also allows you to identify unauthorised CAs and self-signed certificates, the shadow PKI.

Target state: A real-time inventory with full lifecycle tracking shows when each certificate was discovered, issued and renewed, and by which method. Immediate identification of weak algorithms, expired certificates and policy violations is possible. Certificate quality is rated based on NIST, ANSSI and CA/B Forum standards. Centralised monitoring with owner accountability and proactive alerts before critical certificates expire round out the picture.
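Active probing of a single endpoint can be sketched with Python's standard library alone. This is a simplified illustration rather than a description of how any CLM product scans: the function names are hypothetical, and it only handles endpoints whose certificate passes normal verification.

```python
import socket
import ssl
from datetime import datetime, timezone


def fetch_not_after(host: str, port: int = 443, timeout: float = 5.0) -> str:
    """Connect to host:port and return the leaf certificate's notAfter string.

    Certificates that fail verification (expired, self-signed) raise
    ssl.SSLError here; a real scanner would record those as findings.
    """
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]


def days_until_expiry(not_after: str, now: datetime) -> int:
    """Whole days between a timezone-aware 'now' and a notAfter value
    such as 'Jan  1 00:00:00 2030 GMT'."""
    expires = datetime.fromtimestamp(ssl.cert_time_to_seconds(not_after), tz=timezone.utc)
    return (expires - now).days


if __name__ == "__main__":
    # example.com is a placeholder; replace it with an endpoint you own.
    remaining = days_until_expiry(fetch_not_after("example.com"), datetime.now(timezone.utc))
    print(f"example.com: {remaining} days until expiry")
```

Running such a probe across address ranges and ports, and storing the results with a discovery timestamp, is the starting point for the inventory described above.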

3.2 Governance: Policies that scale

What it is: Establishing and enforcing policies for certificate usage across the organisation: who can request which certificates, with which trust levels and algorithms, through which workflows, who must authorise which certificates.

Central governance elements:

-

Define approved Certificate Authorities and certificate trust levels per use case. For example: SwissSign OV TLS for all publicly accessible domains, SwissSign Private Certificates for internal systems.

-

Establish cryptographic standards: key length, algorithms, and validity for each certificate type.

-

Establish naming conventions and metadata requirements.

-

Define approval workflows: Who can request what, with what approvals.

-

Assign ownership at the business unit, team, or individual level.

-

Create revocation and replacement workflows: If a user is removed from a group, the certificate is revoked.

-

Set renewal time guidelines, for example 5 days before expiration, not at the last minute.

-

Define Incident Response: What happens in the event of a certificate-related failure?
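The governance elements above can be expressed as machine-checkable rules. The following is a minimal sketch of such a check; the data structures, the thresholds and the example policy values are assumptions for illustration, not recommendations or a product API.

```python
from dataclasses import dataclass


@dataclass
class Policy:
    approved_cas: set          # CA products allowed for this use case
    min_key_bits: dict         # minimum key size per allowed algorithm
    max_validity_days: int


@dataclass
class CertRequest:
    common_name: str
    ca: str
    algorithm: str
    key_bits: int
    validity_days: int
    owner: str


def policy_violations(request: CertRequest, policy: Policy) -> list[str]:
    """Return human-readable reasons why a request is non-compliant."""
    problems = []
    if request.ca not in policy.approved_cas:
        problems.append(f"CA '{request.ca}' is not approved")
    minimum = policy.min_key_bits.get(request.algorithm)
    if minimum is None:
        problems.append(f"algorithm '{request.algorithm}' is not allowed")
    elif request.key_bits < minimum:
        problems.append(f"key size {request.key_bits} is below the minimum of {minimum}")
    if request.validity_days > policy.max_validity_days:
        problems.append(f"validity of {request.validity_days} days exceeds {policy.max_validity_days}")
    if not request.owner:
        problems.append("no owner assigned")
    return problems


if __name__ == "__main__":
    # Hypothetical policy for publicly accessible domains.
    public_tls = Policy({"SwissSign OV TLS"}, {"RSA": 3072, "ECDSA": 256}, 200)
    request = CertRequest("old.example.com", "Unknown CA", "RSA", 1024, 398, "")
    for reason in policy_violations(request, public_tls):
        print("blocked:", reason)
```

Returning the reasons instead of a plain yes or no is what allows non-compliant requests to be rejected with a clear explanation.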

Integration for policy enforcement: Your Certificate Lifecycle Management should be integrated with the following systems to achieve its full effect: ITSM and ticketing systems like ServiceNow and Jira allow you to embed certificate policies directly into your existing workflows. CMDB provides asset correlation and owner mapping. SIEM systems like Splunk and QRadar enable security event forwarding. Active Directory enables group-based policy enforcement and automatic lockout when users or devices are removed from groups. SCIM automates user and group synchronisation. Identity Providers with OpenID Connect control CLM access.

Target state: Business or IT users can order their own certificates with the appropriate security level. Non-compliant certificate requests are automatically blocked with clear explanations. Each certificate is therefore clearly assigned to an owner, department or purpose and offers the appropriate level of trust. Digitally signed audit logs are tamper-proof and offer forensic detail levels. Automated compliance reports are delivered to the relevant people in the organisation on a scheduled basis.

3.3 Automation: Making 47-Day Certificates Possible

What it is: End-to-end certificate operations – issuance, renewal, provisioning, revocation – without manual intervention.

What are agents? Agents are small software components that are installed on servers or workstations and communicate with your CLM platform. They discover certificates in local storage and file systems. They automatically request and install new certificates. They handle timely renewal before the certificate expires and report the status back to the central dashboard. They translate between modern CLM APIs and legacy protocols that your devices already speak. The agent typically communicates natively over HTTPS with the central CLM.

Agents are often the best option because they provide an easy way to establish automation on legacy systems.

What maturity looks like: Planned certificate renewals are based on your governance and policies. Automatic deployment to all endpoints is done without service interruption. Mass operations for mass revocations or policy updates are easily possible. Native integrations with cloud key vaults such as Azure Key Vault, AWS Certificate Manager and Google Certificate Manager make certificate management in the cloud easy. Modern DevOps tools such as Ansible, Kubernetes cert-manager and Terraform are supported to keep your DevOps agile and secure.
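Policy-driven renewal planning can be illustrated with a small scheduling check. This is a sketch under assumptions: the inventory is a plain dictionary of names to expiry dates, the function names are hypothetical, and the default lead time of 5 days simply mirrors the example from the governance section.

```python
from datetime import date, timedelta


def renewal_date(not_after: date, renew_before_days: int = 5) -> date:
    """Date on which renewal should start for a certificate expiring on not_after."""
    return not_after - timedelta(days=renew_before_days)


def due_for_renewal(inventory: dict[str, date], today: date, renew_before_days: int = 5) -> list[str]:
    """Return the names of all certificates whose renewal date has been reached."""
    return sorted(
        name
        for name, not_after in inventory.items()
        if today >= renewal_date(not_after, renew_before_days)
    )


if __name__ == "__main__":
    # Hypothetical inventory: certificate name mapped to its expiry date.
    inventory = {
        "api.example.com": date(2029, 4, 10),
        "shop.example.com": date(2029, 5, 1),
    }
    print(due_for_renewal(inventory, today=date(2029, 4, 6)))
```

An agent or scheduler would run a check like this daily and trigger issuance and deployment for every name it returns.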

4. Addressing the Sovereignty Challenge

Choosing European Suppliers

For regulated industries in the DACH region, choosing a European Certificate Authority and a local CLM provider supports NIS2 and DORA supplier evaluation requirements, addresses data residency concerns, and mitigates geopolitical risks.

Mitigating Geopolitical Risk: For regulated industries in the DACH region, this is a strategic decision.

EU-based CAs are subject to EU law and offer GDPR alignment as well as reduced sanctions risk within European operations: if the US government sanctions your organisation or parts of it, European CAs do not have to stop their services for you.

Swiss Certificate Authorities enjoy the perception of traditional Swiss neutrality with their Swiss jurisdiction, which may be advantageous in geopolitical disputes.

Switzerland's status as a 'safe third country' for data protection, combined with ETSI and eIDAS compliance, offers European organisations both sovereignty and regulatory compatibility.

Regardless of your choice: document the jurisdiction of your CA and the sanction risk as part of your NIS2/DORA third-party risk assessment.

For a detailed CA comparison: See our analysis 'Trusted Certification Authorities for Germany, Switzerland and Austria'.

5. How to Get There: Modernising Your PKI for 2026

Building the business case internally

Strategic classification: Certificate failures pose a risk to your business continuity: Quantify past incidents, if possible, to make their consequences tangible. Cite compliance requirements such as NIS2 and DORA for the financial sector, which require proper third-party management. Mention the 47-day deadline. The question is when you start automating, not if. March 2026 with a maximum of 200 days is the first milestone to tackle.

TCO analysis considerations: Roughly calculate your current costs: Manual hours for certificate management times hourly rate times number of renewals. Calculate your future situation without automation: The same calculation with eight times the renewal frequency. Certificate-related downtime already costs companies a lot of money today and compromises the security and operability of the business; cite known examples such as those described earlier in this article. Compare: CLM investment versus risk of continued manual management.
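The rough calculation described above can be written down in a few lines. All input figures in this sketch (number of certificates, hours per renewal, hourly rate) are placeholder assumptions to be replaced with your own numbers; only the factor of eight comes from the text.

```python
def annual_manual_cost(certificates: int, hours_per_renewal: float,
                       hourly_rate: float, renewals_per_year: float) -> float:
    """Manual hours times hourly rate times number of renewals, per year."""
    return certificates * hours_per_renewal * hourly_rate * renewals_per_year


if __name__ == "__main__":
    # Placeholder inputs for illustration only.
    certificates = 300
    hours_per_renewal = 2.0
    hourly_rate = 100.0

    today = annual_manual_cost(certificates, hours_per_renewal, hourly_rate, renewals_per_year=1)
    # Same calculation with eight times the renewal frequency.
    future = annual_manual_cost(certificates, hours_per_renewal, hourly_rate, renewals_per_year=8)

    print(f"Current manual cost per year: {today:,.0f}")
    print(f"Manual cost at 8x frequency:  {future:,.0f}")
```

The difference between the two figures is the amount a CLM investment has to be weighed against, before any downtime costs are added.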

Because single-domain certificates are required for automation, compare pricing models carefully: if you can only use single-domain certificates in the future, this can cost significantly more depending on the CA.

Evaluate providers

CA selection criteria: Technical capabilities are similar among all major CAs. Regarding digital sovereignty and support quality, you need to pay attention to the locations of headquarters, contacts, and data.

In addition, there are the pricing models, for example: 9 single-domain certificates for 9 application layers elsewhere mean 9 times the cost. At SwissSign, the same scenario costs about 3 times as much, as we bill per owner, not per serial number. Read our detailed comparison of Certificate Authorities for regulated organisations in the DACH region.

CLM Selection Criteria: Discovery capabilities for network, cloud, endpoints and third-party integrations are crucial, with protocol support needed for ACME, SCEP, EST, WCCE and agents. Cloud connectors for AWS, Azure and GCP are required. MDM connectors for Intune, Jamf and others via SCEP are important.

The entire integration ecosystem must fit your stack: AD, Intune, F5, Kubernetes, SIEM, ITSM, CMDB. Multi-CA support allows the management of certificates from all issuers in one place. European sovereignty is particularly important for regulated industries. Deployment flexibility for on-premises, cloud, or hybrid should be available.

Consider Proof of Concept

Simple POCs (usually free): One to two use cases in a test environment. You can use your own infrastructure, for example a Windows machine: install the agent, scan, demonstrate the replacement workflow. This shows core capabilities without commitment.

Complex POCs (may be chargeable): Including Intune, Azure and complex AD environments. Requires individual tenant configuration, which takes days. The configuration is exportable from good providers such as SwissSign and Evertrust and can therefore be easily transferred to your production environment. This reduces deployment time and costs for the final implementation.

Cloud versus On-Premises CLM Decision

When Cloud-CLM is useful

When infrastructure complexity is limited, only public certificates are managed, and there is no need for deep AD or F5 integration. When ACME and API-based workflows are used and do not require privileged access. When cost-efficiency is important and on-premises infrastructure does not need to be maintained.

When On-Premises is useful

The integration problem with cloud CLM: A cloud CLM needs to reach internal systems. It must connect to Active Directory via LDAP or LDAPS for user synchronisation, reach F5 load balancers for certificate provisioning, and install certificate agents on internal servers.

How does Cloud-CLM reach these systems? The options are limited. You could connect via the internet, but your security team would likely be very critical of this. VPN tunnels: Do you want a permanent VPN connection between the CLM provider and your internal systems? This means that an external provider has 24/7 network access to critical systems. This is difficult for compliance reasons, and your auditors would certainly ask questions.

The Credential Problem with Cloud-CLM: Cloud-CLM requires privileged access. For F5, that means admin credentials for certificate provisioning. For Intune: a privileged account to push certificates. For AD: a service account with write permissions. SSH keys for Linux servers. API tokens for Kubernetes clusters. All these accesses would need to be on a SaaS platform – which many organisations are uncomfortable with for security reasons.

Advantages of an on-premises deployment: The CLM runs within your network perimeter, with direct LDAP access and no VPN. The necessary admin credentials never leave your infrastructure. This approach complies with Zero Trust principles and ensures full data sovereignty, especially in combination with Swiss certificates.

The threshold: From around 5,000 certificates, an on-premises deployment typically becomes more cost-efficient and operationally more sensible for regulated organisations.

6. Conclusion and Call to Action

Summary

The convergence of three major PKI world disruptions – 47-day certificate lifetimes, expanding private PKI, and the coming PQC migration – is forcing organisations to automate their certificate management. Organisations that act now win:

-

Operational Resilience: Preventing Certificate Failures Before They Happen

-

PQC Foundation: Building Agility for Quantum Migration

-

Compliance: Full digital sovereignty with the right CA and CLM providers such as SwissSign and Evertrust

-

Cost control: Avoid budget explosion due to increased renewal frequency with the right CA

-

Competitive advantage: Operate smoothly while others improvise

Your next steps

First: Start with visibility. You can't automate what you can't see. Discovery is the first step. Know what certificates you have, where they are, when they expire, and who owns them.

Second: Evaluate your CA relationship and choose a CLM provider. Consider sovereignty, pricing model (critical for automation economics), and support quality – not just technical features. For your CLM provider, modern discovery methods and good usability for business users are also important. For many large organisations, the integration ecosystem that must fit with your infrastructure is also crucial.

Third: Start now. The first deadline, a maximum TLS/SSL certificate validity of 200 days, takes effect in March 2026. Start now, and get on the right track for digital sovereignty.